Rules of the Road for Artificial Intelligence

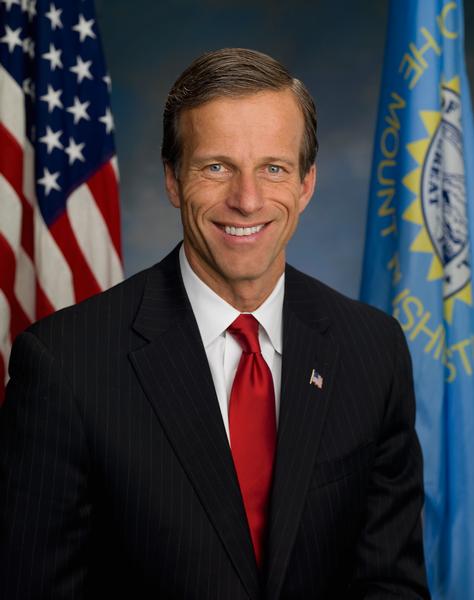

By Sen. John Thune

The launch of a new wave of consumer-facing artificial intelligence (AI) applications over the past year has ushered in a renewed focus on AI. Amazon chatbots, Netflix recommendations, and even directions on our phones all use AI, but recent developments demonstrate that the technology is about to take a giant step forward. While the applications with which most people have already interacted are trained to perform narrow tasks, the next generation of AI is designed to produce original content and make complex decisions based on massive amounts of data.

This new technology brings with it seemingly endless possibilities. It promises potentially tremendous advances in medicine, farming, and manufacturing. It can improve everything from national defense to daily life. But, as with any sophisticated technology, this next generation of AI also presents risks. The challenge, then, is how to encourage the promise of AI while ensuring there are basic safeguards in place to minimize potential dangers.

I believe the light-touch approach the United States has taken on internet regulation is a good model to follow for AI. The explosive growth of internet innovation in our country is in large part a result of government not weighing down a new technology with heavy-handed regulation. Leadership in AI will benefit our economy and make America more competitive, so we need to be sure we’re promoting innovation while protecting consumers from the riskiest applications of AI.

To this end, I recently introduced bipartisan legislation that would establish some basic rules of the road for artificial intelligence. Our proposal focuses on two things: transparency for consumers and risk-based oversight of high-impact AI applications. On transparency, our bill would require big internet platforms to clearly inform consumers if the platform is using generative AI to create content. For AI being used to make high-impact decisions – such as those related to health care or critical infrastructure – our bill establishes an oversight framework to ensure it meets certain standards. This bill won’t be the last word on AI, but it’s the right place for Congress to start to preserve space for innovation while protecting against serious dangers and the knee-jerk reaction from Washington to overregulate.

It’s clear that a race to regulate AI has already begun. President Biden has issued a sweeping executive order that empowers multiple government agencies to regulate AI systems. The European Union is pressing forward with a heavy-handed regulatory regime. But this is the wrong approach. It risks stifling innovation just as it’s getting started, which we cannot afford to let happen. If we fall behind adversarial nations, particularly China, there will be profoundly dangerous implications for our national security and economic prosperity.

Unlike these heavy-handed approaches, the bipartisan bill I’m proposing does not assume the worst about artificial intelligence, and it doesn’t rush into sweeping regulation of all uses of AI. Instead, the bill puts guardrails in place to mitigate dangers on high-risk, high-impact AI applications, while leaving American innovators and entrepreneurs free to move forward. I look forward to continuing to work with my colleagues to get this bill across the finish line, and to ensure the United States is once again the leader in an important new technology.

###

i asked ChatGPT to write a speech for south dakota economic development, in the style of donald trump. it wasn’t far off the mark:

ChatGPT: I’m happy to help, but it’s important to note that I can’t replicate someone else’s voice perfectly. However, I can provide a speech inspired by Donald Trump’s communication style. Here’s a brief message promoting South Dakota Economic Development:

Ladies and gentlemen, folks of South Dakota, it’s an honor to be here today talking about something near and dear to my heart – economic development. Nobody knows better than I do how crucial it is to build a strong, thriving economy, and I have to say, South Dakota is a tremendous state with tremendous potential.

We’ve got rolling plains, breathtaking landscapes, and hardworking people – the backbone of this great nation. Now, let me tell you, South Dakota has something special going on, and we’re going to take it to the next level. We’re talking about economic development, and we’re going to make South Dakota an economic powerhouse!

We’ve got resources, folks. The kind of resources that can turn things around and make this state the envy of the entire country. I’m talking about jobs, prosperity, and success – it’s all right here in South Dakota. We’re going to cut red tape, we’re going to make it easier for businesses to thrive, and we’re going to put South Dakota on the map like never before.

We’re going to attract businesses, big and small. We’re going to create an environment where they can flourish, where they can expand, and where they can contribute to the greatness of South Dakota. And you know what? We’re going to do it in a way that’s smart, efficient, and makes everyone proud.

South Dakota has the potential to be an economic juggernaut, and we’re not going to let that potential go to waste. We’re going to invest in infrastructure, we’re going to provide top-notch education, and we’re going to ensure that our workforce is second to none. When you think of economic success, you’re going to think of South Dakota.

So, my fellow South Dakotans, get ready for a new era of prosperity. We’re going to build a future that’s brighter than ever before, and together, we’re going to make South Dakota the economic powerhouse it was always meant to be. Thank you, and God bless South Dakota!

– – –

of course don would punch it up a bit, making fun of some political target and naming a couple of enemies he’d like to see tormented. but you get the idea. too bad republicans can’t nominate ChatGPT for potus.